John Hern, Evidence Lead for Dixons Academies, gets the measure of the measure

by Bradford Research School

A common measure that we often encounter in educational research is “effect size.” To understand and interpret evidence, it was clear I needed to grasp the basics of effect size and I thought I’d quickly share my initial journey with you.

Where to start? Wikipedia, of course, and the first thing I realised is that effect size comes in all shapes and sizes. Wikipedia claims that “50 to 100 different measures of effect size are known”. In this blog I’ll try to describe “Cohen’s d” effect size because it helps us to interpret the results of Kraft et al’s meta-analysis of coaching impact, which is featured in Stephen Garvey’s Dixons OpenSource video “Coaching Part 1 – Coaching principles.”

So, focusing my enquiry on Cohen’s d, why would a study choose to report an effect size? An effect size can indicate whether an intervention has improved performance and, if so, by how much. That might be the performance of the teacher measured by classroom observation, or it might be the performance of their students in standardised assessments. While t-tests, correlation coefficients and p-values can tell us whether an outcome is likely to reflect a real effect or is likely to be due to chance, effect size estimates how large the difference between groups is, which is especially useful with larger groups or in a meta-analysis.

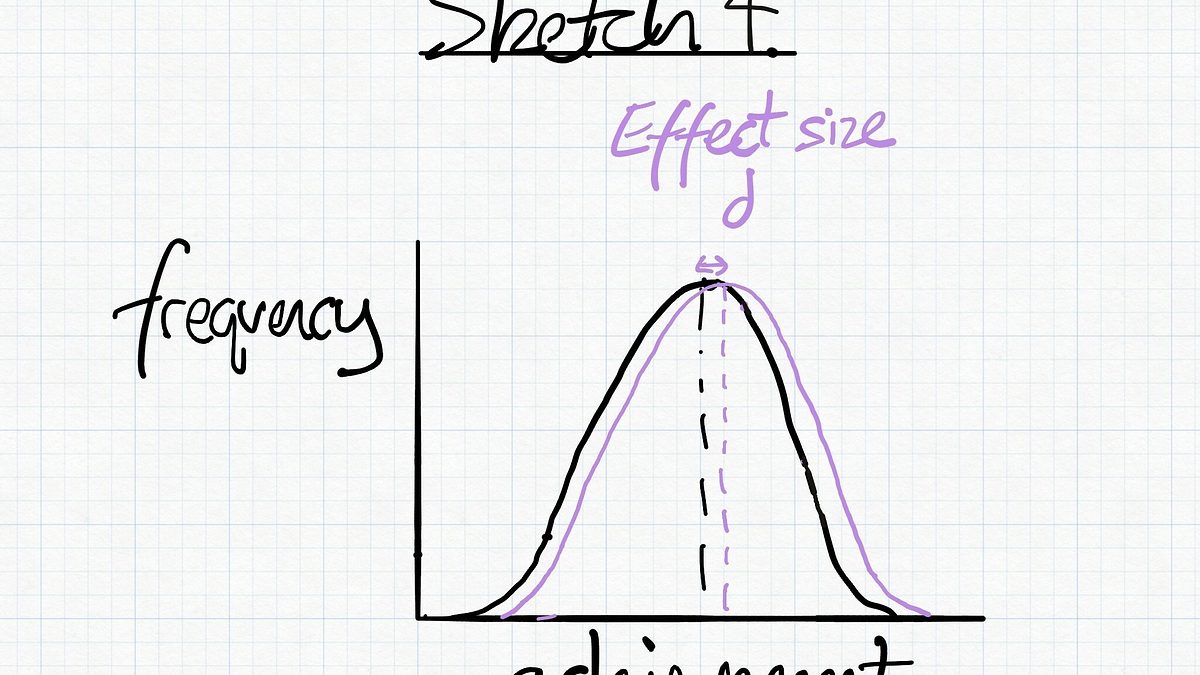

Effect size appears to be well suited to bell-shaped distributions, such as those you might see from measures of skills, knowledge or achievement. In a large enough group of people, such as students, you might expect a range of outcomes (see rough sketch 1*).

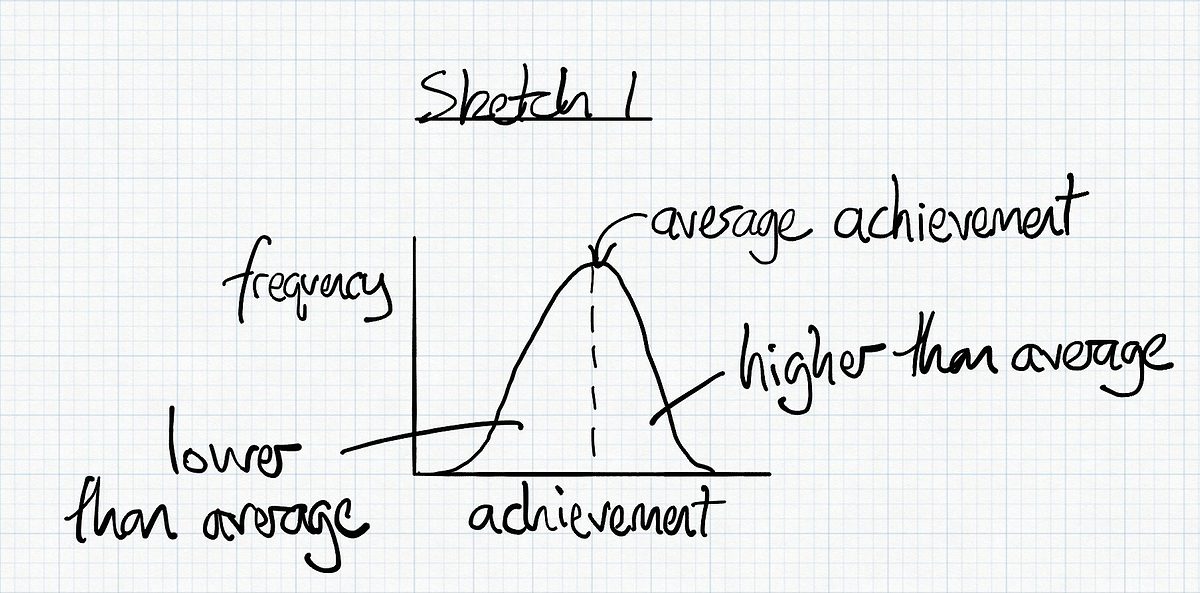

One way of measuring effect size is to look at the difference between two groups, one group with the intervention and one group without. So if you had two similar groups, where one group had received an intervention that improved their performance, it might look like sketch 2.

Here the effect size, d, is a measure of how far apart the means of the two groups are. For Cohen’s d, this can be calculated in simple examples by dividing the difference between the two group means by the standard deviation of the groups (a pooled standard deviation, if the two groups have different spreads).
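As a rough illustration of that calculation, here is a minimal Python sketch. It assumes the pooled standard deviation version of Cohen’s d; the function name `cohens_d` and the two lists of scores are made up for the example and do not come from any study.

```python
import math
import statistics


def cohens_d(treatment, control):
    """Cohen's d: difference in group means divided by the pooled standard deviation."""
    n_t, n_c = len(treatment), len(control)
    mean_difference = statistics.mean(treatment) - statistics.mean(control)
    # Sample variances (n - 1 in the denominator) for each group.
    var_t = statistics.variance(treatment)
    var_c = statistics.variance(control)
    # Pool the two variances, weighting each by its degrees of freedom.
    pooled_sd = math.sqrt(((n_t - 1) * var_t + (n_c - 1) * var_c) / (n_t + n_c - 2))
    return mean_difference / pooled_sd


# Hypothetical scores, invented purely for illustration.
control_scores = [40, 45, 50, 55, 60]
treatment_scores = [42, 47, 52, 57, 62]

d = cohens_d(treatment_scores, control_scores)
print(f"Cohen's d = {d:.2f}")
```

With these invented scores the means differ by 2 points and the pooled standard deviation is about 7.9, so d comes out at roughly 0.25.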

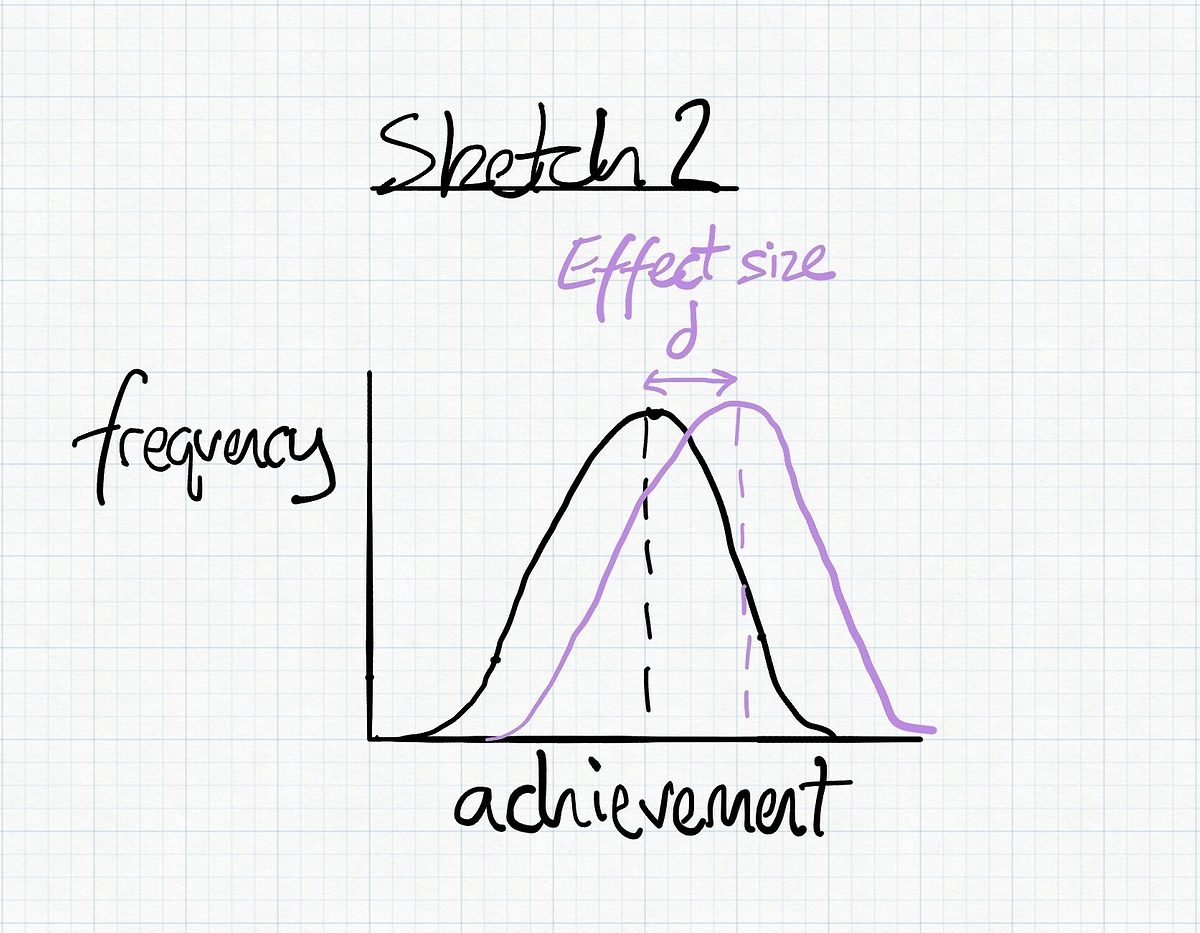

Cohen’s d is measured in units of standard deviation (SD). In a normal distribution, such as the sketches in this blog, we can expect about 68% of our values to lie within plus-or-minus 1 standard deviation of the mean (sketch 3).
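The 68% figure can be checked with a few lines of Python. This is only a quick sanity check using the standard library’s `NormalDist` (Python 3.8 or later), and it assumes a perfectly normal distribution, which real assessment data will only approximate.

```python
from statistics import NormalDist

# Standard normal distribution: mean 0, standard deviation 1.
standard_normal = NormalDist(mu=0, sigma=1)

# Share of values lying between -1 SD and +1 SD of the mean.
share_within_one_sd = standard_normal.cdf(1) - standard_normal.cdf(-1)
print(f"{share_within_one_sd:.1%}")  # prints 68.3%
```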

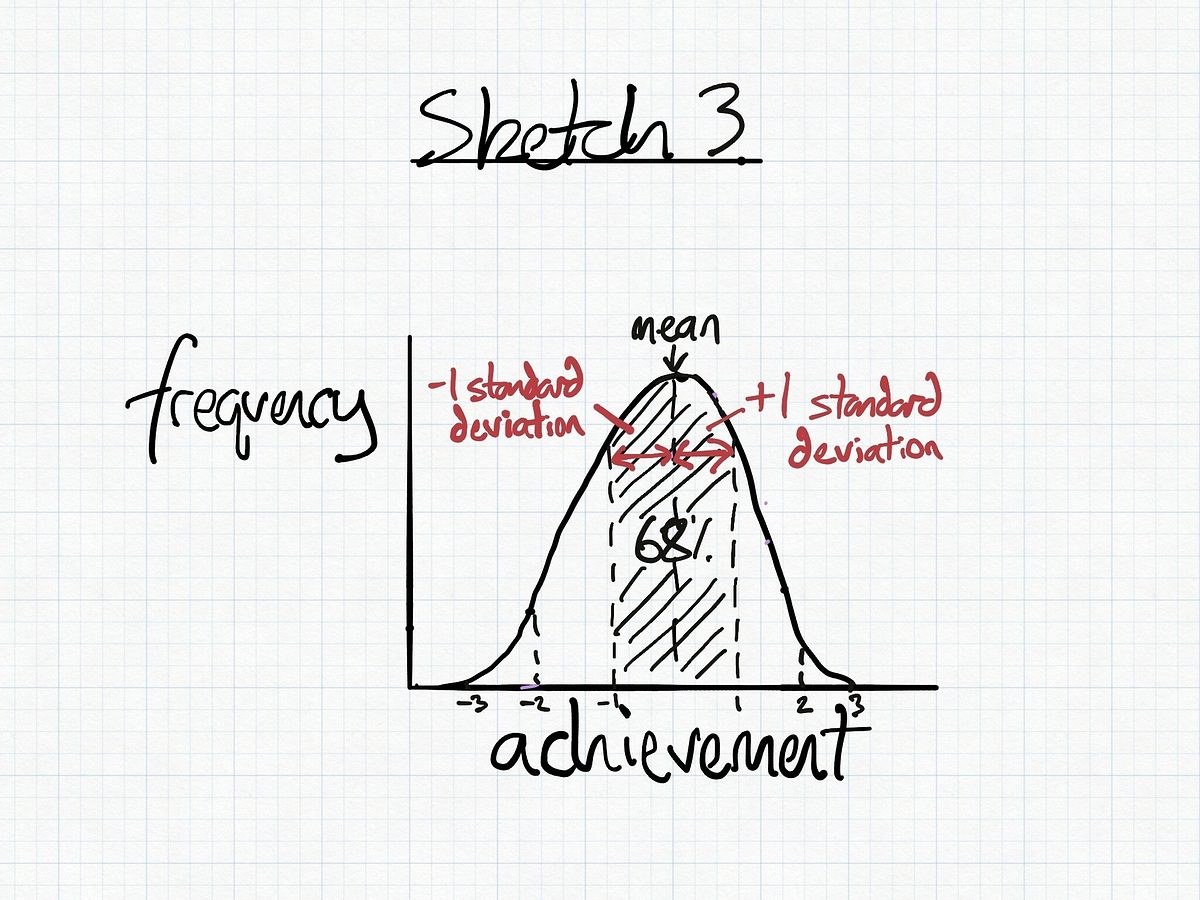

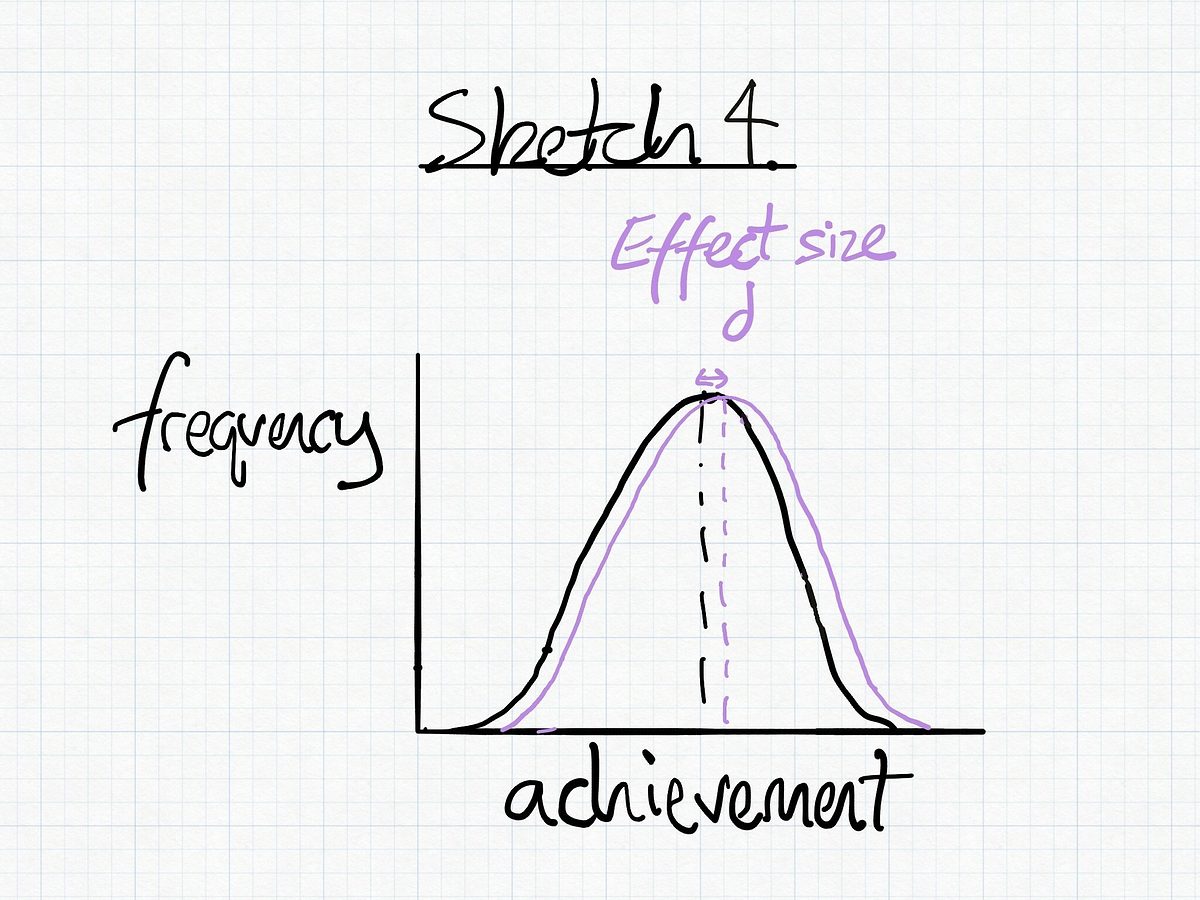

Measuring effect size using Cohen’s d can generate a result for what may be a relatively small change in mean, such as those we see in the coaching interventions pooled in Kraft et al’s meta-analysis. Pooling results from 31 studies, they report that coaching increased student performance in standardised tests by an effect size of 0.18 standard deviations. In an embarrassingly poor sketch, it might look something like sketch 4.

What could this improvement in achievement look like in a UK school? Let’s try an incredibly rough back-of-the-envelope calculation for an imaginary average secondary school. Let’s imagine this school achieved a plausibly average Attainment 8 score of 47 (with a standard deviation of 12) with a cohort of 100 students. If, instead, that cohort had received an intervention which resulted in a Cohen’s d effect size of 0.18, then the mean Attainment 8 score would rise by 0.18 × 12 ≈ 2 points, to about 49. On average, that is equivalent to each student improving by one grade in two of their subjects (or by two grades in one subject)! Using the EEF’s “months’ progress” measure, this would represent 2 additional months’ progress. (For more about the EEF’s impact measures, read the Toolkit guide here.)
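The same back-of-the-envelope arithmetic can be written as a short Python sketch. The numbers are the imaginary school’s figures from the paragraph above; the helper name `shifted_mean` is hypothetical, and the sketch assumes the intervention shifts the whole distribution without changing its spread. It does not attempt the EEF months’ progress conversion.

```python
def shifted_mean(baseline_mean, standard_deviation, effect_size):
    """Mean score after an intervention, given an effect size in SD units."""
    return baseline_mean + effect_size * standard_deviation


# Imaginary school from the text: Attainment 8 mean of 47, SD of 12.
baseline_attainment_8 = 47
attainment_8_sd = 12
coaching_effect_size = 0.18  # pooled estimate reported by Kraft et al.

new_attainment_8 = shifted_mean(baseline_attainment_8, attainment_8_sd, coaching_effect_size)
points_gained = new_attainment_8 - baseline_attainment_8

print(f"Gain: {points_gained:.2f} points, new mean: {new_attainment_8:.2f}")
```

The gain works out at a little over 2 points, which is where the rounded figure of 49 comes from.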

Looking at sketch 4 above, the effect of changes or interventions in schools may not be very easy to notice in everyday life. If you can see in the small dataset of a single cohort that an intervention has clearly had a positive effect, then you’ve probably already noticed it without having to calculate the effect size. It strikes me that a real power of measuring effect size is that it might show if a change results in an improvement, even if that improvement on average is small. A small improvement in outcome for many students, such as across a whole multi-academy trust, may produce a big improvement overall.

If you’ve had the patience to stay with me and read this far then thank you (hi Mum!), and I hope you found this a useful introduction to one way of representing effect size. Feedback is a gift, and if you have the time to share your thoughts on the above with us on Twitter then that would be most appreciated.

* The rough sketches are meant to represent a symmetrical bell-shaped curve. Any deviation from this is merely the product of my inadequate quick sketching.

John Hern teaches at Dixons McMillan Academy. He is also an Evidence Lead for Dixons Academies Trust, focusing on the evidence around coaching.

References

Kraft, M.A., Blazar, D. and Hogan, D., 2018. The effect of teacher coaching on instruction and achievement: A meta-analysis of the causal evidence. Review of Educational Research, 88(4), pp. 547–588.